We propose a novel angular velocity estimation method to increase the robustness of Simultaneous Localization And Mapping (SLAM) algorithms against gyroscope saturations induced by aggressive motions. Field robotics exposes robots to various hazards, including steep terrains, landslides, and staircases, where substantial accelerations and angular velocities can occur if the robot loses stability and tumbles. These extreme motions can saturate sensor measurements, especially gyroscopes, which are the first sensors to become inoperative. While the structural integrity of the robot is at risk, the resilience of the SLAM framework is often given little consideration. Consequently, even if the robot is physically capable of continuing the mission, its operation will be compromised by a corrupted representation of the world. To address this problem, we propose a way to estimate the angular velocity using accelerometers during extreme rotations caused by tumbling. We show that our method reduces the median localization error by 71.5 % in translation and 65.5 % in rotation and reduces the number of SLAM failures by 73.3 % on the collected data. We also propose the Tumbling-Induced Gyroscope Saturation (TIGS) dataset, which consists of outdoor experiments recording the motion of a lidar subject to angular velocities four times higher than other available datasets. The dataset is available online at https://github.com/norlab-ulaval/Norlab_wiki/wiki/TIGS-Dataset.

Contributions

- A novel method to estimate robot angular velocities during gyroscope saturation periods.

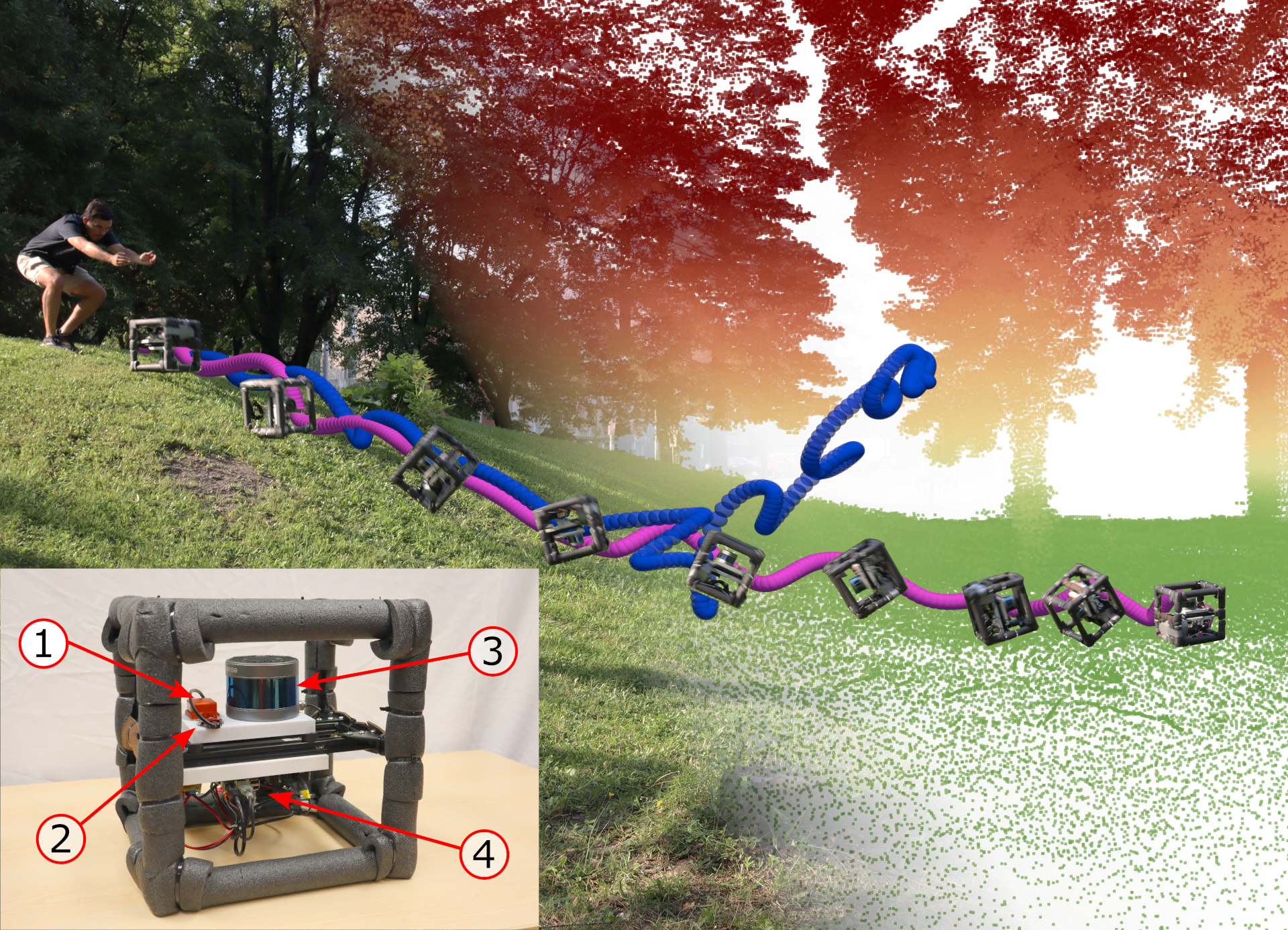

- The Tumbling-Induced Gyroscope Saturation (TIGS) dataset, consisting of 32 distinct runs of a custom perception rig tumbling down a steep hill, reaching angular velocities up to 18.6 rad/s.

Results in Images

The top figure shows our robot localization system tumbling down a steep hill. At the top of the figure is a picture of the event and the reconstructed point cloud. The blue trajectory is a SLAM-estimated trajectory relying on raw gyroscope measurements. The pink trajectory is the same run, this time estimated with our angular velocity estimation approach. The rugged perception rig is shown in the bottom left. The numbers in the red circles correspond to (1) XSens MTi-30 IMU, (2) VectorNav VN-100 IMU, (3) RoboSense RS-16 lidar, and (4) Raspberry Pi 4. The saturation points of the MTi-30 and VN-100 gyroscopes are 7.85 rad/s and 34.9 rad/s, respectively. Using this platform, we acquired scans at angular speeds up to 18.6 rad/s and accelerations up to 157.8 m/s². When the perception rig tumbled down the hill, we recorded lidar scans and IMU measurements and used our method to build a map of the environment and localize within it. Our method requires no prior knowledge of the environment.
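To make the notion of a saturation period concrete, the following is a minimal sketch of how saturated gyroscope samples could be flagged from the limits quoted above. It is not the detection logic of the published method: the function names `saturated_mask` and `saturation_intervals` and the 2 % `margin` are hypothetical choices made for illustration.

```python
import numpy as np

# Gyroscope saturation limits quoted in the text, in rad/s.
MTI30_LIMIT = 7.85
VN100_LIMIT = 34.9


def saturated_mask(gyro, limit, margin=0.02):
    """Flag samples where any gyroscope axis is at or near its limit.

    gyro:   (N, 3) array-like of angular velocities in rad/s.
    limit:  saturation limit of the sensor in rad/s.
    margin: relative tolerance below the limit (an assumed value,
            not taken from the paper).
    """
    gyro = np.asarray(gyro, dtype=float)
    threshold = (1.0 - margin) * limit
    return np.any(np.abs(gyro) >= threshold, axis=1)


def saturation_intervals(mask):
    """Convert a boolean mask into (start, stop) index pairs, stop exclusive."""
    padded = np.concatenate(([False], np.asarray(mask, dtype=bool), [False]))
    # Rising and falling edges alternate, so even entries are starts
    # and odd entries are stops.
    edges = np.flatnonzero(np.diff(padded.astype(int)))
    return list(zip(edges[0::2].tolist(), edges[1::2].tolist()))
```

With such a mask, a pipeline could substitute an alternative angular velocity estimate only inside the flagged intervals and keep the raw gyroscope readings everywhere else.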

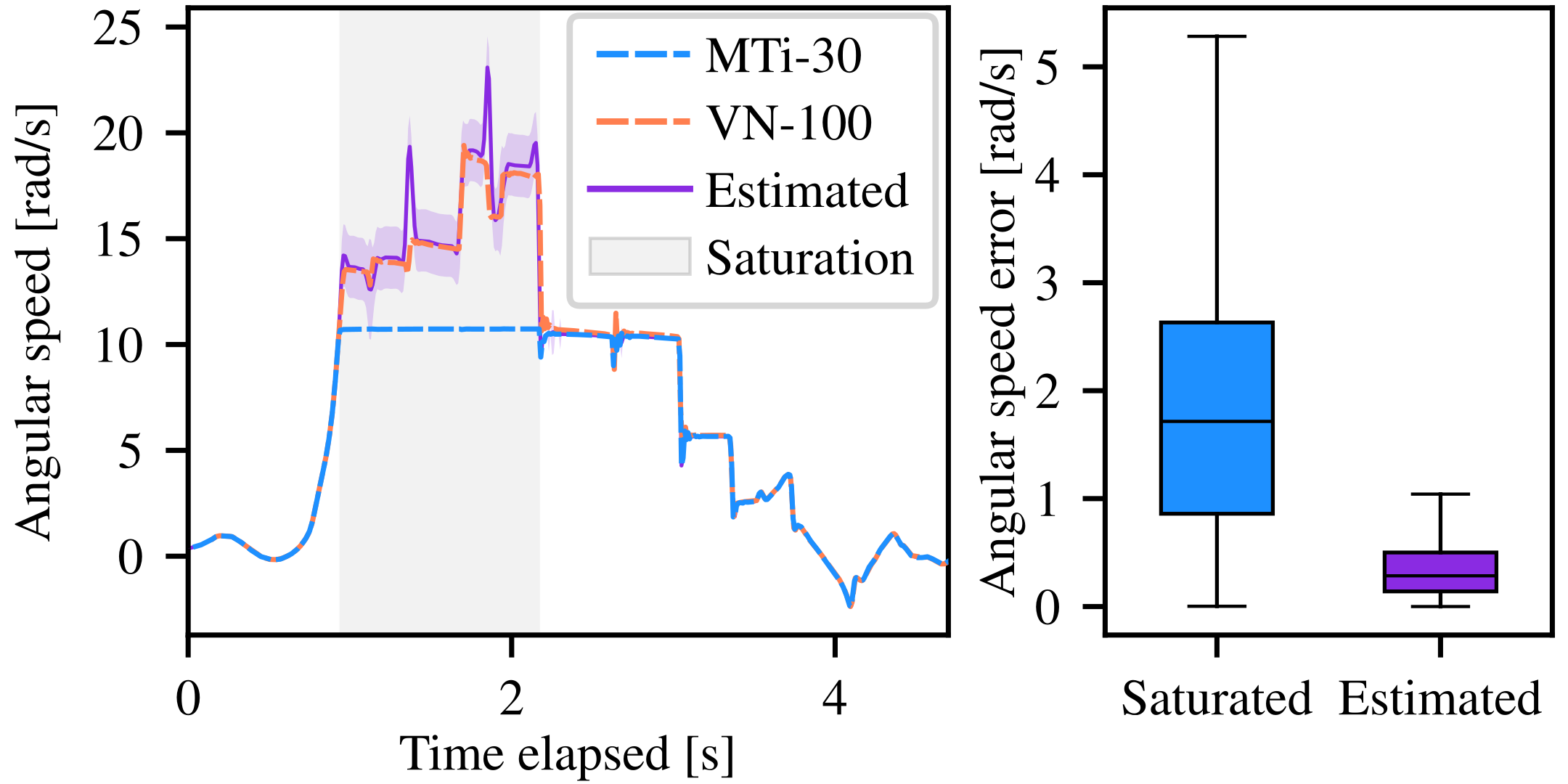

The bottom figure is split into two plots. The left plot shows an example of the angular speed over time for the saturated gyroscope axis of an IMU during a single run of our experiments. In dashed blue are the measurements from an MTi-30 gyroscope, in dashed orange are the measurements from a VN-100 gyroscope, and in purple are the angular speeds reconstructed with our method using MTi-30 gyroscope measurements. The purple-shaded area represents three standard deviations above and below the estimated speed. The right plot shows the error in angular speed without (in blue) and with (in purple) our method during saturation periods for all runs. Our approach reduces the median angular velocity error by 83.4 % compared to saturated gyroscope measurements. As expected, our angular velocity estimation approach significantly reduces angular velocity error under gyroscope saturations, especially for extreme values.
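For readers who want to compute a comparable statistic on their own recordings, here is a minimal sketch of a median-error reduction. It assumes that an unsaturated sensor (for instance the VN-100, whose limit exceeds the speeds reached) serves as the reference; that choice, the function name `median_error_reduction`, and the input layout are assumptions, not a description of the paper's evaluation code.

```python
import numpy as np


def median_error_reduction(reference, baseline, estimate):
    """Percent reduction in median absolute error of `estimate` vs `baseline`.

    All inputs are 1-D array-likes of angular speeds in rad/s, sampled at
    the same instants and restricted to saturation periods.
    """
    reference = np.asarray(reference, dtype=float)
    err_baseline = np.abs(np.asarray(baseline, dtype=float) - reference)
    err_estimate = np.abs(np.asarray(estimate, dtype=float) - reference)
    med_baseline = np.median(err_baseline)
    if med_baseline == 0.0:
        raise ValueError("baseline median error is zero; reduction is undefined")
    return 100.0 * (med_baseline - np.median(err_estimate)) / med_baseline
```

A positive return value means the estimate has a lower median error than the saturated baseline; a negative value means it is worse.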

Reference

Deschênes, S.-P., Baril, D., Boxan, M., Laconte, J., Giguère, P., & Pomerleau, F. (2024). Saturation-Aware Angular Velocity Estimation: Extending the Robustness of SLAM to Aggressive Motions. Proceedings of the IEEE International Conference on Robotics and Automation (ICRA). https://doi.org/10.48550/arXiv.2310.07844