Registration algorithms, such as Iterative Closest Point (ICP), have proven effective for mobile robot localization over the last decades. However, they are susceptible to failure when a robot sustains extreme velocities and accelerations. This kind of motion can happen after a collision, for example, and causes the point cloud to be heavily skewed. While point cloud de-skewing methods have been explored in the past to increase localization and mapping accuracy, these methods still rely on highly accurate odometry systems or ideal navigation conditions. In this paper, we present a method that takes into account the remaining motion uncertainties of the trajectory used to de-skew a point cloud, along with the environment geometry, to increase the robustness of current registration algorithms. We compare our method to three other solutions on a test bench producing 3D maps with peak accelerations of 200 m/s² and 800 rad/s². In these extreme scenarios, we demonstrate that our method decreases the error by 9.26 % in translation and by 21.84 % in rotation. The proposed method is generic enough to be integrated into many variants of weighted ICP without adaptation and supports localization robustness in harsher terrains.

Contributions

- A novel motion uncertainty-based point weighting model, allowing the robustness of registration algorithms to be improved when subject to high velocities and accelerations, requiring neither high-fidelity pose estimation nor prior knowledge of the environment.

- Experimental comparison of our proposed approach with state-of-the-art approaches on a dataset gathered at high velocities and accelerations.

- A highlight of the impact of point cloud skewing on the accuracy of localization and mapping.
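
To make the ideas of de-skewing and uncertainty-based weighting more concrete, here is a minimal planar sketch. It is not the model from the paper: the constant-velocity motion assumption, the function names `deskew_point` and `point_weight`, the parameters `sigma_v`, `sigma_omega` and `sigma_0`, and the weighting formula itself are all illustrative assumptions. The only geometric fact it relies on is that an angular error displaces a point in proportion to its range.

```python
import math


def deskew_point(p, t, v, omega):
    """Express a 2D point measured at time t in the frame at scan start (t = 0).

    Assumption: constant planar velocity v = (vx, vy), expressed in the
    scan-start frame, and constant yaw rate omega during the scan.
    """
    theta = omega * t
    c, s = math.cos(theta), math.sin(theta)
    x = c * p[0] - s * p[1] + v[0] * t
    y = s * p[0] + c * p[1] + v[1] * t
    return (x, y)


def point_weight(p, t, sigma_v, sigma_omega, sigma_0=0.01):
    """Hypothetical weight in (0, 1] that shrinks as motion uncertainty grows.

    Assumption: velocity uncertainties sigma_v (m/s) and sigma_omega (rad/s)
    accumulate linearly with elapsed time |t|, and the angular part is scaled
    by the point's range. sigma_0 (m) stands for the sensor noise floor.
    """
    r = math.hypot(p[0], p[1])
    sigma = (sigma_v + r * sigma_omega) * abs(t)
    return sigma_0 ** 2 / (sigma_0 ** 2 + sigma ** 2)


# A point 10 m away, measured 0.1 s after the start of the scan.
point, stamp = (10.0, 0.0), 0.1
corrected = deskew_point(point, stamp, v=(3.5, 0.0), omega=11.0)
weight = point_weight(point, stamp, sigma_v=0.1, sigma_omega=0.01)
print(corrected, weight)
```

In a weighted ICP variant, a weight of this kind would scale each point's contribution to the alignment cost, so that points whose de-skewed position is least certain influence the registration the least.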

Results in Images

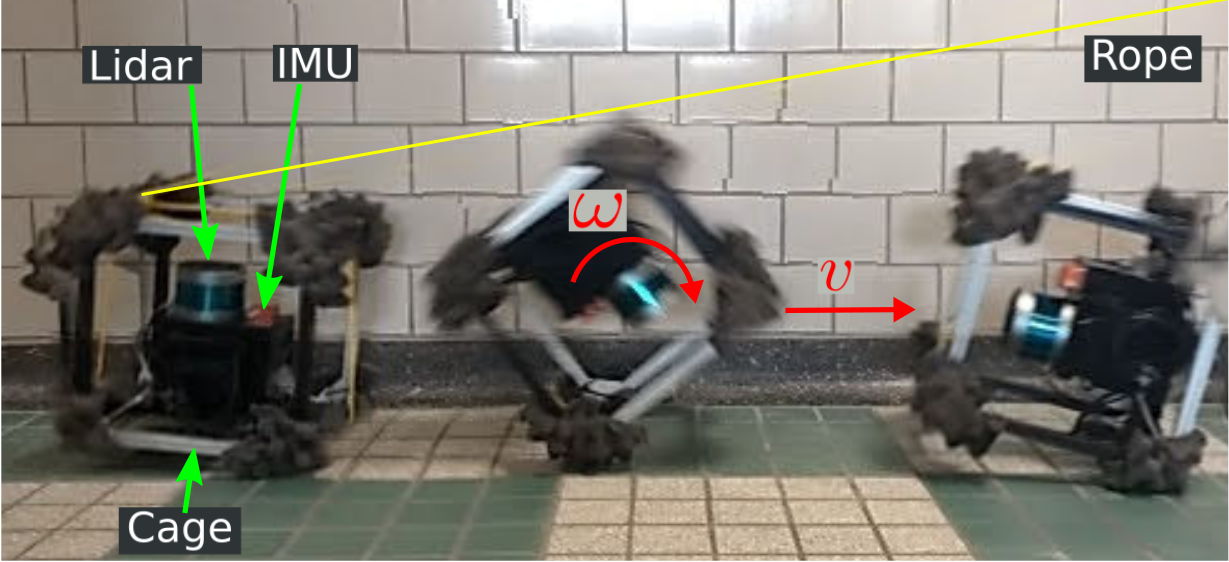

The top figure shows the experimental platform used for this work. A lidar and an IMU are mounted on a sensing platform, which is protected by a cage. A rope is attached to the platform and pulled on by an operator to induce high velocities and accelerations during lidar scans. Using this platform, we acquired scans in an indoor garage at linear speeds up to 3.5 m/s and linear accelerations up to 200 m/s², and at angular speeds up to 11 rad/s and angular accelerations up to 800 rad/s². While the operator pulled the rope, we recorded scans and used our method to build a map of the environment and localize within it. No prior knowledge of the environment is required by our method.

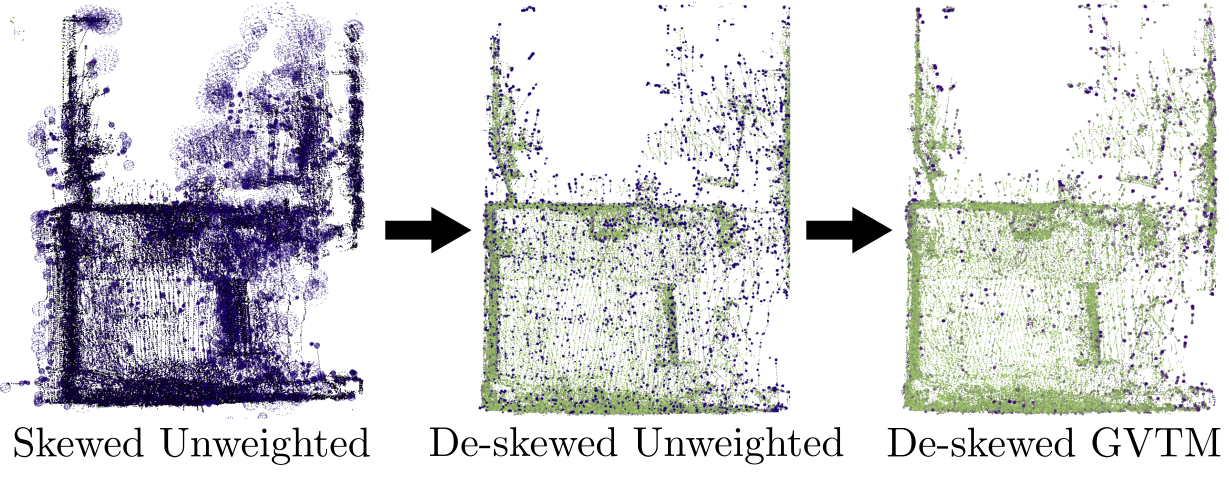

The bottom figure shows a top view of three 3D reconstructions of the environment built while applying peak accelerations up to 194.98 m/s² on our sensing platform. All three maps were built from the same data, and our method was used only for the rightmost map. Points in dark purple have high error and points in green have low error. The error was computed against a ground truth map built while moving the sensors slowly. De-skewing reduces the mean error from 21.95 cm to 7.27 cm. Further improvement is gained by using our method, leading to a mean error of 6.86 cm. For example, one can observe an improvement in accuracy on the left wall.
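
To put the mean map errors quoted above in relative terms, the short calculation below derives percentage reductions from the three reported values. The helper name `relative_reduction` is introduced here for illustration only.

```python
def relative_reduction(before, after):
    """Percentage by which the error drops when going from `before` to `after`."""
    return 100.0 * (before - after) / before


# Mean map errors reported for the three reconstructions, in centimetres.
no_deskew_cm = 21.95
deskew_cm = 7.27
ours_cm = 6.86

print(f"De-skewing vs. no de-skewing: {relative_reduction(no_deskew_cm, deskew_cm):.1f} %")  # 66.9 %
print(f"Our method vs. de-skewing:    {relative_reduction(deskew_cm, ours_cm):.1f} %")      # 5.6 %
print(f"Our method vs. no de-skewing: {relative_reduction(no_deskew_cm, ours_cm):.1f} %")   # 68.7 %
```

These percentages concern the mean map error of this one run and are separate from the translation and rotation error reductions quoted in the abstract.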

Reference

Deschênes, S.-P., Baril, D., Kubelka, V., Giguère, P., & Pomerleau, F. (2021). Lidar Scan Registration Robust to Extreme Motions. 2021 18th Conference on Robots and Vision (CRV). https://doi.org/10.1109/CRV52889.2021.00014